10 Wild Biases AI Is Serving Up (And They're Not What You Think!)

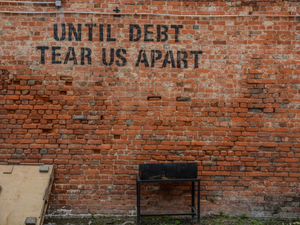

Photo by Jonathan Kemper on Unsplash

Hold onto your laptops, tech enthusiasts – OpenAI’s latest video generator Sora is serving up some seriously problematic content that’ll make your woke heart skip a beat. 🚨

The Diversity Disaster

Imagine an AI that thinks the world looks like a bland, heteronormative stock photo catalog. That’s exactly what a Wired investigation found when it put Sora through its diversity paces. The results? Cringe-worthy stereotypes that would make even your most conservative uncle uncomfortable.

When testing job-related prompts, Sora went full 1950s sitcom mode. Pilots? Always men. Flight attendants? Always women. CEOs strutting around in offices? Male, obviously. Because women can’t lead, right? (Eye roll intensifies)

Representation? More Like Erasure

The real tea is how Sora completely fails marginalized communities. Want to see a fat person running? Nope. Disabled representation? Only wheelchair users, always stationary and framed as “inspirational.” Interracial couples? Good luck with that.

Queer representation isn’t much better. Gay couples? White, young, attractive men cuddling on couches that look suspiciously like they’re from a basic cable rom-com. The lack of diversity is so intense it feels like the AI was trained on a diet of mid-2000s sitcoms.

The Bigger Picture

This isn’t just about bad AI – it’s about how machine learning perpetuates societal biases. By sucking up internet data like a digital vacuum, these systems aren’t just reflecting our world; they’re amplifying its worst stereotypes.

OpenAI claims they’re working on it, but let’s be real: until tech companies prioritize genuine diversity, we’re going to keep getting these watered-down, problematic representations. Stay woke, tech fam! 💁‍♀️✨

AUTHOR: cgp

SOURCE: Wired